This article concerns the use of deep learning techniques in predicting stock prices. It summarizes the master’s thesis of Vittorio Cecchetto, “Deep Learning for natural language analysis in the field of stock market movement prediction.”

In finance, a stock price can be predicted through two different approaches. The first approach, called fundamental analysis, relies on the financial strength and profitability of a company to determine its intrinsic value. This involves estimating the company’s present value and its future prospects to assess whether it will grow or decline.

The second approach, called technical analysis, is instead based on the study of the stock price behavior over time. Disregarding company-specific information, the focus is only on the stock price, assuming that the price time series encapsulates the present and future of the company, at least in the short term.

Standard technical analysis starts from the stock price time series and, through indicators and mathematical and statistical methods, aims to identify time intervals in which the stock is overvalued or undervalued, suggesting the sale or purchase of shares of that company. In recent years, technical analysis has found a new ally: artificial intelligence (AI). State-of-the-art AI models can forecast a stock’s performance continuously (not only at specific moments in time) and with a high degree of accuracy.
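As a concrete illustration of the indicator-based approach of standard technical analysis, here is a minimal sketch that compares each price with its simple moving average and flags large deviations. The indicator, the 3-day window, the 5% threshold, and the function names are all assumptions chosen for illustration; they are not taken from the thesis.

```python
def simple_moving_average(prices, window):
    """Return the moving average series; entries before a full window are None."""
    if window <= 0:
        raise ValueError("window must be positive")
    averages = []
    for i in range(len(prices)):
        if i + 1 < window:
            averages.append(None)  # not enough history yet
        else:
            averages.append(sum(prices[i + 1 - window:i + 1]) / window)
    return averages


def deviation_signals(prices, window, threshold):
    """Flag days where the price deviates from its moving average.

    `threshold` is a fraction, e.g. 0.05 means 5% (an illustrative value).
    """
    signals = []
    for price, avg in zip(prices, simple_moving_average(prices, window)):
        if avg is None:
            signals.append("hold")
        elif price > avg * (1 + threshold):
            signals.append("sell")  # price well above its recent average
        elif price < avg * (1 - threshold):
            signals.append("buy")   # price well below its recent average
        else:
            signals.append("hold")
    return signals


# Toy prices, made up for the example.
prices = [100.0, 101.0, 99.0, 100.0, 110.0, 100.0, 90.0]
print(deviation_signals(prices, window=3, threshold=0.05))
```

Real indicators are considerably more refined, but the principle is the same: a rule derived only from the price series marks intervals where the price looks too high or too low.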

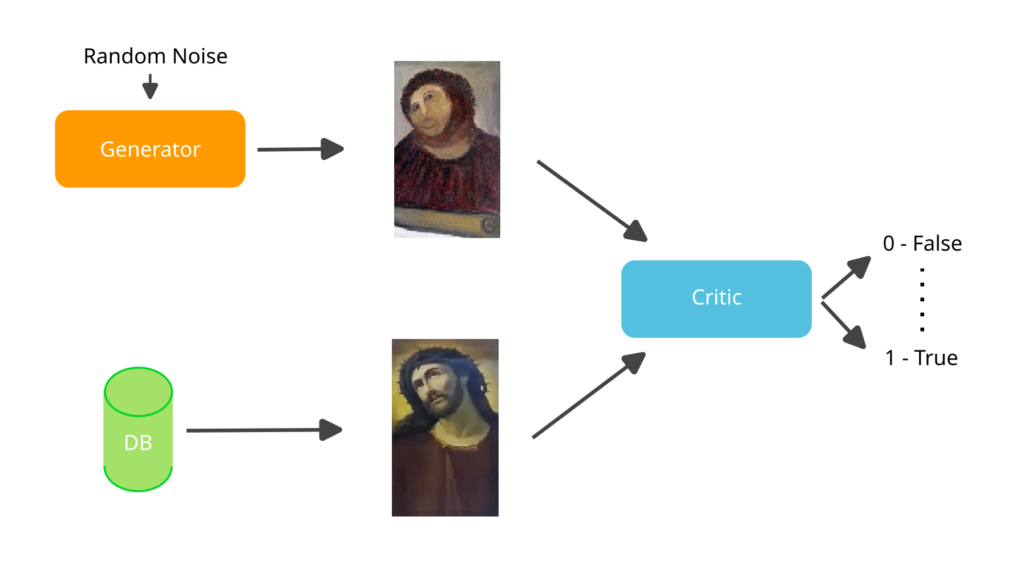

One of the most recent models was first proposed in 2017 [1] and is called Wasserstein Generative Adversarial Network (WGAN). To give an intuitive idea of how it works (see Figure 1), let’s suppose we have a set of images of paintings by a specific artist. A WGAN consists of two AIs: one tries to generate images similar to the artist’s paintings, while the other plays the role of an art critic and judges whether a presented image is an original painting or a fake. During training, the AI generating the paintings becomes increasingly skilled at imitating the artist’s style, while the AI acting as the art critic becomes more skilled at detecting the fakes.

Figure 1: Working principle of a Generative Adversarial Network (GAN)
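For readers who want the formal counterpart of this game, the objective introduced in [1] can be written as below. Here $G$ denotes the network that generates samples from random noise $z$, $f$ denotes the critic, $P_r$ is the distribution of real data, and the critic is restricted to 1-Lipschitz functions. The symbol names are a common convention and an assumption of this sketch, not notation taken from the thesis.

$$
\min_{G} \; \max_{\|f\|_{L} \le 1} \;\; \mathbb{E}_{x \sim P_r}\big[f(x)\big] \;-\; \mathbb{E}_{z \sim p(z)}\big[f(G(z))\big]
$$

The critic tries to assign high scores to real samples and low scores to generated ones, while the generator tries to close that gap. Unlike the classifier in the original GAN, the critic outputs an unbounded score rather than a probability of being real.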

In the master’s thesis, a WGAN-based AI was applied to predict the Apple stock price. Within the WGAN framework, one AI was tasked with generating the next day’s price based on the information from the previous three days, while the second AI had the role of determining the plausibility of the price proposed by the first AI.
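The "previous three days in, next day out" setup can be sketched as a sliding window over the price series. This is a minimal illustration that uses a single price column; the function name and the toy prices are assumptions, and the actual thesis pipeline used many more input features.

```python
def make_windows(prices, lookback=3):
    """Pair each run of `lookback` consecutive prices with the price that follows it."""
    samples = []
    for i in range(lookback, len(prices)):
        history = prices[i - lookback:i]  # the previous `lookback` days
        target = prices[i]                # the next day's price
        samples.append((history, target))
    return samples


# Toy closing prices, made up for the example.
closing = [150.0, 152.0, 151.0, 153.0, 155.0]
for history, target in make_windows(closing):
    print(history, "->", target)
```

In the WGAN setting, the generating network would receive `history` and propose a value for `target`, and the second network would judge how plausible that proposal is.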

To train the neural networks, we used the Apple stock price over a 10-year time window. Additionally, we incorporated the price movements of other big-tech companies (Google, Facebook, Amazon, Microsoft), some commodities (Gold, Oil), and several stock market indices (S&P500, Nasdaq, Dow Jones, NYSE, FTSE100, Hang Seng, Nikkei, SSE, S&P BSE, Euro Stoxx 50, Euronext 100). To further enrich the dataset, we included the sentiment of financial news about Apple. These news articles were extracted from seekingalpha.com, a financial news website, using web scraping techniques. The extracted articles were then processed with an AI model called FinBERT, which is based on BERT, a general-purpose language model developed by Google. FinBERT performs sentiment analysis and assigns each article a sentiment score indicating whether the news is positive, negative, or neutral.
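One possible way to turn per-article sentiment into a daily feature that sits alongside the price data is sketched below. It assumes each article has already been labelled positive, neutral, or negative, and it averages a numeric encoding of those labels per day. The encoding, the function name, and the sample data are assumptions made for illustration; the article does not describe how the thesis aggregated the scores.

```python
from collections import defaultdict

# Hypothetical numeric encoding of the three sentiment labels.
LABEL_TO_SCORE = {"positive": 1.0, "neutral": 0.0, "negative": -1.0}


def daily_sentiment(labelled_news):
    """Average the encoded sentiment of all articles published on the same day."""
    scores_by_day = defaultdict(list)
    for date, label in labelled_news:
        scores_by_day[date].append(LABEL_TO_SCORE[label])
    return {
        date: sum(scores) / len(scores)
        for date, scores in sorted(scores_by_day.items())
    }


# Made-up (date, label) pairs standing in for labelled articles.
news = [
    ("2020-01-02", "positive"),
    ("2020-01-02", "neutral"),
    ("2020-01-03", "negative"),
]
print(daily_sentiment(news))
```

Days without any news would need a separate convention, for example a neutral score of 0.0, before the feature can be joined with the price series.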

Most of the data (80%) was used to train the AI; once the training was completed, the remaining 20% was used to test its performance. The results are shown in Figure 2, where the predicted price closely follows the actual price, yielding a mean squared error of 2.05.

Figure 2: Comparison between real and predicted price
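The 80/20 split and the error metric mentioned above can be sketched as follows. The sketch assumes a chronological split (earlier data for training, later data for testing), which is the usual choice for time series but is not stated explicitly in the article; the function names and sample values are likewise illustrative.

```python
def chronological_split(samples, train_fraction=0.8):
    """Split a time-ordered list into an earlier training part and a later test part."""
    cut = int(len(samples) * train_fraction)
    return samples[:cut], samples[cut:]


def mean_squared_error(actual, predicted):
    """Average of the squared differences between actual and predicted values."""
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    if not actual:
        raise ValueError("cannot compute the error of an empty series")
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


# Ten time-ordered toy samples: the first 8 go to training, the last 2 to testing.
series = list(range(10))
train, test = chronological_split(series)
print(len(train), len(test))

# Toy actual and predicted prices, made up for the example.
print(mean_squared_error([10.0, 12.0], [11.0, 10.0]))
```

Because the error is squared, its scale depends on the price level, so a mean squared error is best interpreted relative to the typical price of the stock over the test period.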

The amount of data used for training may appear large. In fact, much larger amounts of data would be needed. For instance, one can envision using this technique in High-Frequency Trading, where the available data is thousands of times larger than the dataset used here.

References:

[1] M. Arjovsky, S. Chintala, L. Bottou, Wasserstein Generative Adversarial Networks, Proceedings of the 34th International Conference on Machine Learning, vol. 70, pp. 214-223 (2017).